Unpredictable Patterns #23: Being wrong - better

On the danger of labeling mistakes as biases, operating in failure mode, being wrong and errorology as strategy

Dear reader,

We must declare summer. The weather has been brilliant and I am sitting outside writing this, really enjoying the luxury of writing and drinking coffee outside. The pandemic is still real, but at a distance we can now reassemble parts of normality and explore what life will look like after the pandemic. What new values have we found and are there ideas that we have finally left behind? Or will it all fade like a bad dream? I don’t think so. I do think that we will see subtle shifts in value systems and other deep social patterns - but I am often wrong. And that is the subject of today’s note - being wrong, in new and better ways.

Errorology

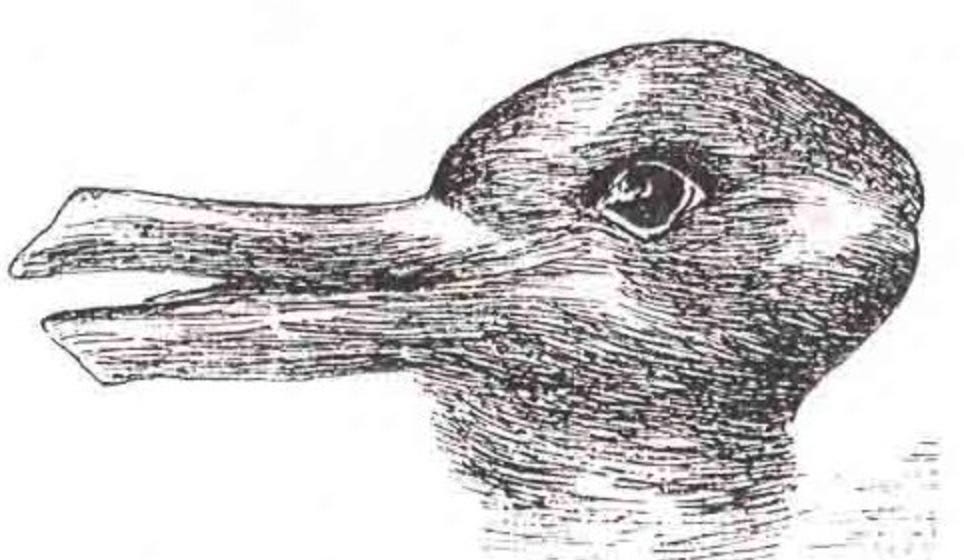

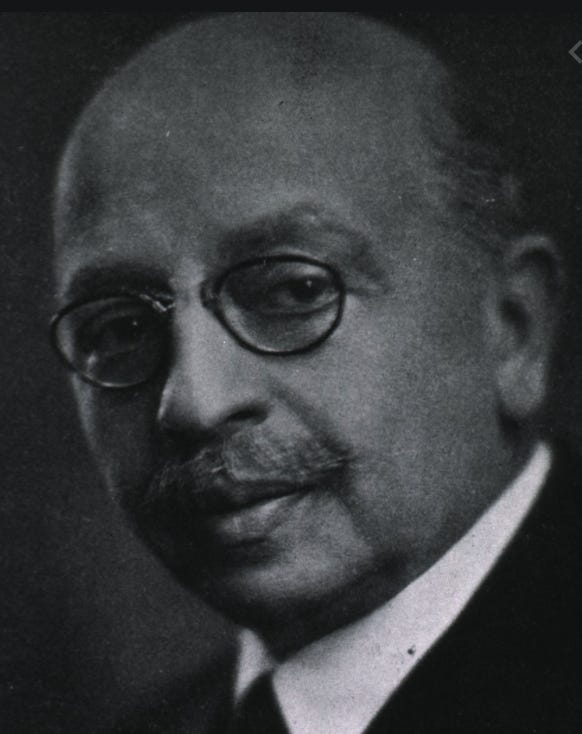

If we have trial, we will have error. The study of error can proceed in many different ways - one is the study of the different ways in which we end up in error, and this is what we find in, for example, Francis Bacon’s famous idols of the tribe, theater, cave and forum - updated by the now sadly neglected psychologist and thinker Joseph Jastrow as the idols of the mind - idols of the self, thrill and web - and the idols of society - idols of the mass, mold and cult.

Jastrow’s deep interest in error led him to classify, early on, the roots of error as the following:

The self projects the subjective on nature, the thrill is the choice of the romantic and exciting over the mundane, the web is the connection of data that is at most showing us a spurious correlation.

In society we are deluded into thinking what the mass thinks, we get stuck in our own mold and class and ultimately resort to the cult, the myths and dogmas of our time in explaining what we cannot organize into our current model of the world.

Jastrow noted that the mind is often and easily led astray and that the knowledge we claim as self-evident today was produced by a lot of blundering. The study of this blundering he called ”errorology” and he asserted that understanding our errors is as important as understanding the logic of science and the deepening of our knowledge overall.

Jastrow edited a volume called The Story of Human Error that explores and examines errors in different branches of science - working with collaborators to carefully examine how we make errors.

In our time this project has expanded into a general examination of the idea of rational man. We have discovered what we think of as “biases” and in addition to this now know that a lot of what we ascribe to merit really should be ascribed to mere luck. We have been revealed as rational frauds, in a sense, and this has led us to mistrust our own judgment in many ways.

Errorology, thus, slides into a curious version of misanthropy where we seem to find some humbling joy in discovering mistakes and shortcomings in the human mind as it is compared with a yardstick of abstract - almost machine - rationality.

Jastrow would have been sympathetic to this project, and suggested that the book he wrote might have been called ”Our misbehaving minds” - and this is what we are currently being told our minds do - they misbehave when they should be rational and scientific.

I don’t think that is true, and, furthermore, I think that often means that we focus on the wrong ways in which we are wrong.

Bias or tools handed down by evolution?

The better way to think about biases is probably to treat them as evolutionary patterns of thinking that serve to economize the energy and resources used for solving real natural problems, as opposed to the artificial problems that we often use when we want to show that there are deep biases and flaws in our thinking.

One of Tversky’s and Kahneman’s examples, the one about Linda the bank teller, is a really interesting case - one that I have discussed elsewhere, but that is worth repeating.

In the experiment we are told that Linda studies in Berkeley (famously progressive political school) and she is studying economics. We are told she is active in student politics and that she cares about a lot of different causes. We are then asked to assess the probability of the following two statements.

(i) Linda is a bank teller

(ii) Linda is a bank teller and a feminist.

Many people assess (ii) as more probable, even though - as behavioral economists gleefully point out - this is mathematically impossible. The conjunction of a and b can never be more probable than a alone - this is trivial probability theory, and yet people get it wrong, or so the experiment says.
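The rule itself is easy to check by counting. Here is a minimal sketch in Python, assuming a toy population whose numbers are invented purely for illustration (they are not from the experiment): everyone who is a bank teller and a feminist is also counted among the bank tellers, so the second share can never exceed the first.

```python
from fractions import Fraction

# Hypothetical toy population: each tuple is (is_bank_teller, is_feminist).
# The counts are invented for illustration only.
population = (
    [(True, True)] * 3
    + [(True, False)] * 2
    + [(False, True)] * 55
    + [(False, False)] * 40
)


def probability(people, predicate):
    """Share of people for whom the predicate holds."""
    matches = sum(1 for person in people if predicate(person))
    return Fraction(matches, len(people))


p_teller = probability(population, lambda p: p[0])
p_teller_and_feminist = probability(population, lambda p: p[0] and p[1])

# Everyone counted in the conjunction is also counted in the single
# statement, so the conjunction can never come out larger.
assert p_teller_and_feminist <= p_teller
print(p_teller, p_teller_and_feminist)  # prints "1/20 3/100"
```

Whatever counts you put in, the assertion holds, which is all that the propositional reading of the experiment claims.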

But is this really a mistake?

What is it that is going on here? Mervyn King and John Kay suggest in their book Radical Uncertainty that people do not understand the world in propositions, but rather in narratives, and so the real mistake is Tversky’s and Kahneman’s. The idea that we can divide the world into singular propositions and that the individual proposition is the best unit of probability analysis is simply a mistake born from our over-reliance on the mental model of propositions as the foundation of knowledge.

There is, in fact, no evidence that we understand the world as a set of discrete propositions at all. We understand the world as narrative, and evolution has taught us narrative probability assessments, not propositional probability theory.

Narratives, or stories, are enormously efficient compression algorithms. They contain explanations of the world and ensure that everything sticks together. When we hear the story about Linda we expect that everything in the narrative makes sense and is important, and so why tell us about her engagement in student politics if it will not return in the logic of the narrative? As we then assess probability we look at the entire narrative component and assess the probability of the story we are being told.

When we approach probability we do it with poetics as the key tool for analysis, not mathematics. This is not a new insight, and if you read your Aristotle (always read your Aristotle) you will be familiar with the notion of dramatic probability as he uses it in his Poetics:

”It is clear from what has been said that the task of the poet is not to tell of what has happened, but what might happen, and what is possible according to probability or necessity”.

We evolved to understand the world poetically, because that is much more efficient than understanding the world scientifically - to a point. That means that we understand the world in narratives and that the rules of good fiction are better guides to our thinking than logic and mathematics.

The rules of fiction are clear: if you introduce a knife in the first part of a play, it needs to be used before the end of the play - and this is exactly why we also assess (ii) - that Linda is a bank teller and a feminist - as more likely than her just being a bank teller.

Dramatic probability beats out propositional probability.

But is this not just admitting the point that we are wrong when we assess (ii) as more probable than (i)? Interestingly this depends on the underlying order that we are trying to predict. If the world we are assessing is propositional with equally distributed probabilities, then yes - we are wrong. But if the world is ordered in a narrative way, then the mistake is to assume propositional probabilities.

And the human world *is* often ordered narratively - because we order it in that way, with our stories and the way we live our lives. That means that picking (ii) will more often be right than just picking (i) - if we by being right mean being able to continue the narrative or use the narrative correctly in predicting future events.

Being wrong, then, is about something much more interesting than assessing probabilities - it is about a mismatch between our concepts and the world. And the way we normally think about biases and rationality conflates two concepts of intelligence: an artificial, propositional one and the natural, narrative intelligence that evolved over millennia.

Being Wrong Better

Are all biases really cognitive strengths? Absolutely not, especially not the biases that underpin racism or gender inequality or generally destructive behaviors that evolved under completely different forms of life. But here is the thing - if we want to pay more attention to when we are wrong, we need to be very careful in classifying and understanding the kinds of wrong that we can be. If we end up being wrong about how we are wrong, well, then we will not learn as much as we hope, and I sincerely think that the popularity of the biases thinking risks leading to a situation where we classify our mistakes too fast, and neglect really examining them closely. We dismiss a mistake by saying that we fell for the availability heuristic and then move on with our lives.

Mistakes often have a much richer texture and are useful to examine not with templates, but as unique sources of insight. As a culture we are almost devaluing the ways in which we are wrong by reducing them to mere labels. Our mistakes should not be labeled as much as they should be explored and understood. This means that I think we need to really jettison all the biases lists and maps if we are serious about being wrong in better ways.

Being wrong in better ways is the topic of Kathryn Schulz’s excellent book Being Wrong: Adventures in the Margin of Error. This book lists and examines different ways in which we are wrong and explores how we can get better at being wrong. Schulz shows that being wrong is almost physically painful and, to connect to our earlier discussion, we have not evolved to be wrong. If we want to be wrong better we need not just to practice actively as individuals, but to build institutions that help us be wrong in ways that actually allow us to progress.

Because that is the prize we are looking for here; the ability to be wrong better is the key to learning more and deeper things about the world we are in.

If the first step toward being wrong better is to stop resorting to simple labeling of our mistakes, the second is to document when we were wrong and what we were wrong about. We can do this in two ways - before the fact and after the fact.

Annie Duke, professional poker player and author of Thinking in Bets, is an advocate for using a simple decision journal; looking at the decisions we make and documenting them. The best way to do this is to write down not just the decision, but also the premises on which we made the decision - what did we think was going on? Then, returning to the decision journal after the decision plays out, you will be able to see if you were correct or if you were wrong in part or in whole for that decision.

This is hard, for two reasons. First, we have already noted that we do not like being wrong so we tend to avoid acting in ways that can catch ourselves in the uncomfortable realization that we messed up. Second, in the pace of the everyday work we engage in, documenting decisions often is dismissed as useless bureaucracy. This view is essentially saying that you have committed to work in a way where you do not learn anything.

A decision journal, complete with assumptions, is a key tool of learning in any organization - or even in your personal life. Keeping a journal of decisions is a great way to also discover yourself; not just who you are, but what patterns of behavior you exhibit over time. This can be deeply sobering, but also provides the perhaps only way out of behaviors that are self-defeating.
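For readers who prefer to keep such a journal in code rather than in a notebook, here is a minimal sketch in Python. It is one possible shape under my own assumptions, not Duke’s method: the names `DecisionEntry`, `review` and `unreviewed`, and the example entry, are all hypothetical. The point is simply that the assumptions are written down at decision time and revisited once the decision has played out.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class DecisionEntry:
    """One journal entry: the decision plus the premises behind it."""
    decided_on: date
    decision: str
    assumptions: List[str]
    expected_outcome: str
    actual_outcome: Optional[str] = None  # filled in at review time
    wrong_assumptions: List[str] = field(default_factory=list)

    def review(self, actual_outcome: str, wrong_assumptions: List[str]) -> None:
        """Record how the decision played out and which premises failed."""
        unknown = [a for a in wrong_assumptions if a not in self.assumptions]
        if unknown:
            raise ValueError(f"Not in the original assumptions: {unknown}")
        self.actual_outcome = actual_outcome
        self.wrong_assumptions = list(wrong_assumptions)

    @property
    def reviewed(self) -> bool:
        return self.actual_outcome is not None


def unreviewed(journal: List[DecisionEntry]) -> List[DecisionEntry]:
    """Entries that still need a look once the decision has played out."""
    return [entry for entry in journal if not entry.reviewed]


# Invented example entry, for illustration only.
entry = DecisionEntry(
    decided_on=date(2021, 6, 1),
    decision="Send the reader survey in June",
    assumptions=["Readers have more time in summer", "A short survey gets more replies"],
    expected_outcome="At least 100 replies",
)
journal = [entry]

# Later, when the outcome is known:
entry.review("40 replies", wrong_assumptions=["Readers have more time in summer"])
```

Refusing to accept a "wrong assumption" that was never written down is a deliberate choice in this sketch: it keeps the review honest about what we actually believed at the time.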

The second way in which we can document where we went wrong is the post mortem. Tech companies typically run post mortems after incidents of different kinds - like security or reliability failures - and the rules of a good post mortem are not too hard to guess:

Blame is useless. A post mortem should find what went wrong, not who messed up. That someone could mess up was often the problem, not that they eventually did. Focusing on ”Human Error” makes you, as Sidney Dekker points out in his brilliant Field Guide to Understanding Human Error, focus on all the wrong things.

Go deep. Ask why until you discover root causes. The ”why-depth” of your post mortems will vary, but the 5 whys of the Toyota corporation is a good place to start.

Lay out the events in time. Do not just identify what went wrong - identify how it went wrong over time, and the points where it could have been fixed. We are not wrong at points in time, we slowly and methodically build our mistakes.

Experiment. Test solutions against the post mortem to see how it would have evolved and pay close attention to any new weaknesses and vulnerabilities you have now created. Assume you always create new problems when you solve old ones.

And so on.

The post mortem can be played in advance as a pre mortem, asking before a project is run how it will fail or back casting how it succeeded, and that is another way in which you can be virtually wrong.

Decision journals, post mortems and a focus on what has gone wrong need to be built into organizational culture, of course. This is perhaps the hardest bit, since it means that there need to be ways of rewarding people when they are wrong in better ways - something that seems difficult. But culture is what is rewarded and what is punished, and paying lip service to learning and failure will never be enough.

Ways of being wrong re-examined

I have argued that we should avoid labeling our mistakes as simple biases too quickly, but I do think that it is valuable to discuss the different ways in which we can be wrong. There is real value in Kahneman’s and Tversky’s work, of course, and the challenge is perhaps to approach error and mistakes with the same curiosity they did rather than think that error has been explained and hence can be readily excused. ”My biases made me do it” is not a good way to think about error, and obscures the larger lessons that we should draw.

If we look at broad categories of ways in which we often can be wrong, I think the following causes are sometimes undervalued.

Not updating beliefs often enough. The world changes, and one of the key insights from the great educator Hans Rosling was that most people believe the world still looks the way it did when they left the 9th grade. This was true for his Nobel Prize-winning colleagues as well as for most of us. Check your baseline beliefs more often - it is a part of intellectual hygiene.

Having just one mental model. This is what Wittgenstein sometimes refers to as being held hostage by an image, that we have a model that is so visceral that we see it in our minds and it captivates us. The question of what images hold us hostage is a good one to ask frequently. If we try to articulate the image we have of something it usually gives us interesting insights into what we may be getting wrong.

Neglecting the small improvements. You do not need to look for big ways in which you are wrong, there is great value in small improvements as well - especially in the ones that compound over time and allow you to grow faster.

Assuming irrationality. Far too often we say - openly or to ourselves - that others are being stupid. It is almost always a mistake to assume this, and you will be better off if you assume that others are acting on rational grounds.

Not asking enough questions. This is a key mistake that usually leads to us being wrong on things that we should have gotten right. Asking questions is never an admission of weakness, but rather a way to find the real problem.

Not stating assumptions clearly. This is related to both not asking questions and assuming irrationality. One of the most powerful ways to explore thinking in a group is to approach a decision by individually laying out what your assumptions are and then comparing notes.

Aspect blindness. Joseph Jastrow was not just interested in errorology; he was also one of the first psychologists to identify aspect seeing in a study of the famous duck-rabbit. In many cases where we were wrong, we simply missed that the duck was also a rabbit! A version of this is system blindness, where we do not see the system we are operating in.

Making decisions that are too big or making decisions too rarely. There is sometimes a tendency to make really big decisions, because it feels as if they matter more. The best way to approach decision making is finding the smallest possible decision that improves your situation and gives you new information. Interestingly, one of the key insights from people who end up managing disasters is that you need to keep making decisions all the time, because making many decisions means trying many things, but that those decisions should be small and, if possible, reversible. You can be wrong by not making decisions!

There are many other ways in which you can be wrong, of course, but these provide a few interesting starting points for thinking about what we can try to improve our decisions.

Living in landscapes of error

No discussion of error and being wrong would be complete without also thinking about how to live with error. The insight here is not dramatic - that making mistakes and being wrong is important is something we pay lip service to quite often. It is harder to practice, of course, but that is true for a lot of things. But how should we think about operating in a world where we are wrong most of the time?

One way to answer that question is to focus on the errors. As John Gall writes in his often surprising and funny book Systemantics: The Systems Bible:

”Error correction is what we do”

Gall addresses engineers, but there is some value in exploring how true this is and should be for us as well. After all, as managers, for example, what is the best perspective on our work? To create new bold initiatives or try to correct the errors in the projects and tasks that are already running? The second seems uninspiring and fundamentally depressing - but is it really?

Correcting errors is about focusing our efforts on our own position. Sun Zi - quoted to death by management writers and wannabe strategists - is often read lazily, and people miss one of the most important statements he makes in The Art of War:

”Sun Tzu said: The good fighters of old first put themselves beyond the possibility of defeat, and then waited for an opportunity of defeating the enemy.

To secure ourselves against defeat lies in our own hands, but the opportunity of defeating the enemy is provided by the enemy himself.

Thus the good fighter is able to secure himself against defeat, but cannot make certain of defeating the enemy.”

Error correction is the best strategy. And this insight - that we far too often look for attacks rather than to build an unassailable position - is worth exploring deeper.

If we go back to Gall, he also notes that all sufficiently complex systems operate in failure mode all the time, and that the failures they generate cannot be deduced from their structure - they go wrong all the time in different ways, but we can live in these systems by identifying the places where they fail systematically, by observing rather than analyzing them. This is also true for understanding a strategic or political situation - if we understand where our opponents are wrong most often we will also understand how they think. What are the three last mistakes that your opponent made? How can these be understood as a part of their thinking style?

The abundance of mistakes and the fact that all of us are wrong much of the time suggests an insight about our operational environment, and also suggests that there is great value in seeing errors across society, organizations and individuals. Those errors define us, often much more than our successes do - and so the patient study of errors is perhaps the most undervalued way to get to know a market, company or individual.

Imagine a biography of a famous individual that laid out all the errors she or he made rather than just telling the story of their successes - wouldn’t that be interesting?

Being right

Being wrong, being in error, making mistakes - we usually think about this as aberrations in our everyday actions and lives. The reality is that we probably are a lot like Gall’s systems: we operate in failure mode most of the time and we are wrong more than we are right if we calculate the overall sum of things we believe and do. When we speak about the success of Silicon Valley it is a stock component to speak of how tech companies embrace failure - fail faster, fail in new ways - and it is not hard to think that this should be a key to success. Castigating those who fail is essentially to dissuade learning.

But is it true? Does Silicon Valley embrace failure? Or is the reality that it embraces error correction? It is not the failures, but the ways in which you recover from them that matter, and so the way you navigate the error landscape is what differentiates the successful from the permanent failures.

Navigating error, being wrong better - that is the key to sustainable competitive advantage. Because that is how you end up being something close to right.

Thank you for reading, and do spread the word if you find the newsletter interesting!

Nicklas