Unpredictable Patterns #154: The token race

How agentic states emerge, infinite regulatory attention, mutually assured depletion and the legitimacy of fully enforced laws

Dear reader,

Travel week, and I am trying to find some time to write in between trains and flights. This is written during a break at the excellent TUM Think Tank, and addresses something I think will be an interesting area to think more about in the future: the token races enabling attention abundance as a means to exercise power.

Defensive AI uses

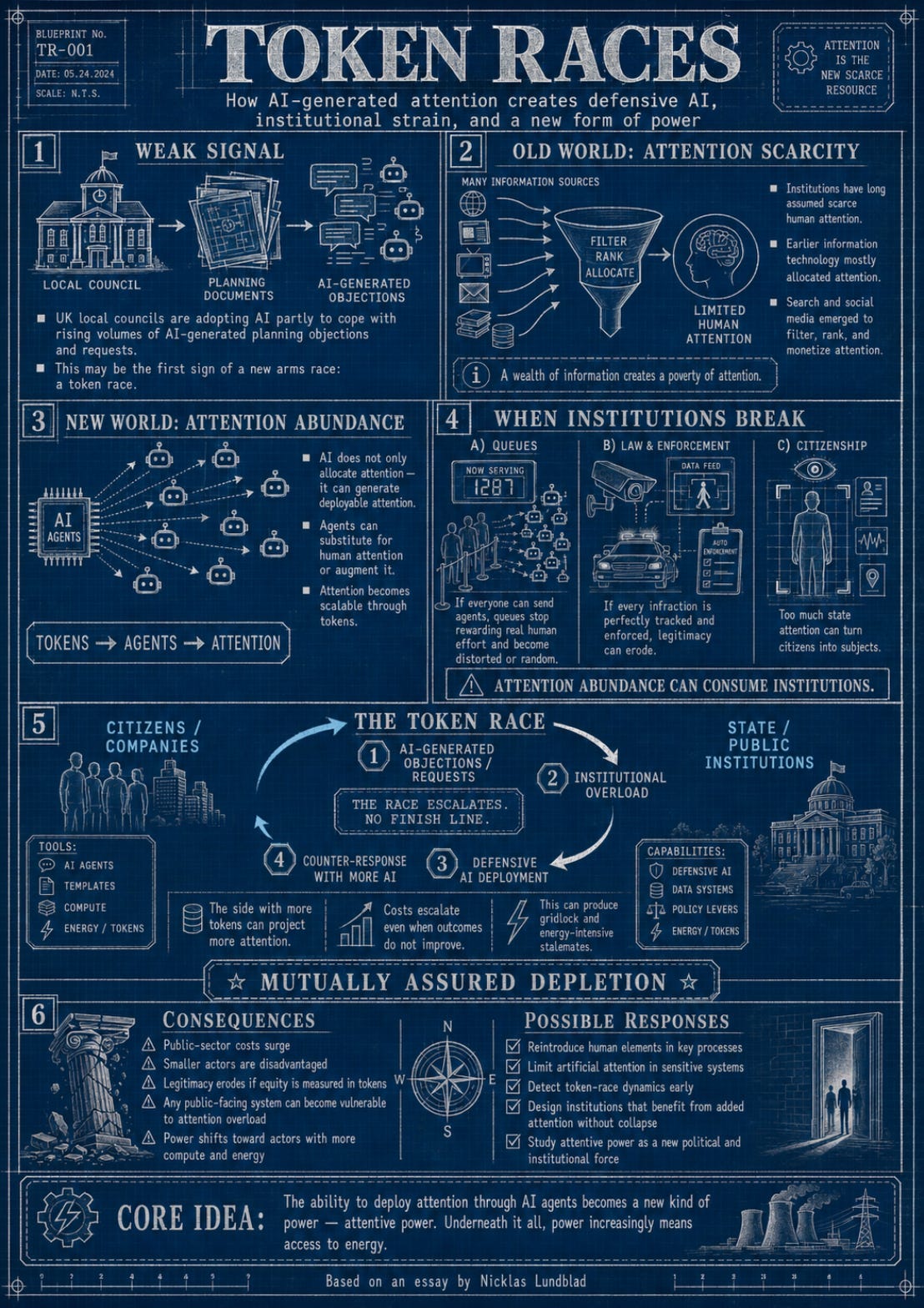

UK local councils recently announced that they are implementing AI for planning processes. This, in itself, is of course a natural step for any government to take: identify processes that can be supported by AI and then implement solutions that might help to create more robust public services. But one thing stood out in the reporting: the councils announced that one reason they were doing this was to respond to an increase in AI-generated objections.1

This is a weak signal that deserves some attention: what we could be witnessing here is the first emergence of a new kind of arms race, a token race, where government is assailed by AI-generated citizen initiatives, objections and requests, and so needs to respond in kind by making sure that it has the resources to answer with its own AI services.

If this is the case it suggests an interesting hypothesis about the agentic state: maybe it does not arise in the planned, careful and engineered way that has been imagined so far, with oversight and decisions made on the basis of robust evidence. Maybe the agentic state actually emerges as a defensive necessity, and will be shaped by the state’s need to respond to the massive amounts of attention that suddenly can be massed against all public service surfaces.

Here is the thing: most institutions are premised, in different ways, on a scarcity of attention. The fact that neither the state nor the citizen has infinite attention has never had to be articulated as a premise, because it has been a natural precondition of any social relationship. This scarcity has, in fact, been the foundation of a lot of the technology we have developed in the last couple of decades: remember Herbert Simon’s famous observation in “Designing Organizations for an Information-Rich World”:2

Now when we speak of an information-rich world, we may expect, analogically, that the wealth of information means a dearth of something else—a scarcity of whatever it is that information consumes. What information consumes is rather obvious: it consumes the attention of its recipients. Hence a wealth of information creates a poverty of attention, and a need to allocate that attention efficiently among the overabundance of information sources that might consume it.

In this gap between a wealth of information and a poverty of attention we find a lot of the different business models that have characterized the early information technology revolution: search and social networks are both responses to this, and both ended up struggling between allocating attention and monetizing attention in different ways.

Now, I don’t think this was wrong: the allocation of attention is hard and has required a lot of innovation and investment, so the fact that the business model had to cover the costs for that is hardly concerning. The question, of course, is whether there is a point where the equilibrium between reasonable monetization and allocative benefits breaks down.

The point I want to make, however, is different. If early information technology allocated attention, artificial intelligence does something different: it generates a new kind of attention that can be deployed in ways that substitute for or complement our own. Simon, whose 1969 talk ended up saying that we need to invest in artificial intelligence, only saw the complementary part and argued that we should dream about a world in which a difficult subject can be learned much faster. But what we see is that there are also substitution uses, where human attention is no longer needed.

And that is where it gets complicated.

An abundance of attention and institutional failure

John Searle once noted that institutions are made of “collective intentionality” - a slightly more advanced version of attention. When we pay attention to money with the intention of having it be a store of value and a means of exchange it becomes exactly that. But one reason that this is the case is that we cannot pay attention to everything equally: if we had infinite amounts of attention, then institutions would weaken.

Most institutions are built on the premise of a scarcity of attention.

Take the simplest possible example: a queue. When new concert tickets are released, the concert organizer can announce that they will be released at a certain time and people will have to queue up for the tickets. The reason this works is that not everyone will have the time - attention - to do this. With agents, we can ask a million agents to queue for us, and then the idea of the queue devolves into random allocation based on which agents happen to be first in some sense of the word.
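To make the queue example concrete, here is a minimal sketch of how the allocation shifts once a few people field many agents. It is a toy model under loud assumptions: arrival order is purely random, every queue entry gets one ticket, and the function name and parameters are hypothetical, chosen only for illustration.

```python
import random


def agent_share_of_tickets(humans, agent_users, agents_each, tickets, seed=0):
    """Return the fraction of tickets won by agent-run queue entries.

    Hypothetical toy model: every queue entry, human or agent, arrives in a
    uniformly random order, and the first `tickets` entries get one ticket each.
    """
    rng = random.Random(seed)
    # One entry per queuing human, `agents_each` entries per agent user.
    entries = ["human"] * humans + ["agent"] * (agent_users * agents_each)
    rng.shuffle(entries)  # assumption: position in the queue is pure chance
    winners = entries[:tickets]
    return winners.count("agent") / tickets


if __name__ == "__main__":
    # 1,000 people queue in person; 10 others send 0, 1 or 1,000 agents each.
    for agents_each in (0, 1, 1_000):
        share = agent_share_of_tickets(1_000, 10, agents_each, 100)
        print(f"{agents_each} agents each: agent share of tickets = {share:.2f}")
```

In this sketch the queue stops rewarding the people who showed up and starts rewarding whoever fielded the most entries, which is the sense in which it devolves into a lottery weighted by agents.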

Or take peer review. The institution rests on a particular asymmetry: it is harder to write a serious paper than to evaluate one, and reviewers — though unpaid and overworked — are at least not vastly outnumbered by the manuscripts they receive. Remove that asymmetry and the institution starts to wobble. If submitting becomes nearly free while reviewing remains expensively human, the queue at the gate grows until the gatekeepers either give up, automate their own attention in turn, or begin rejecting on heuristics that have less and less to do with the substance of the work. We already see early versions of this — journals overwhelmed, desk-rejection rates climbing, reviewers quietly running submissions through their own models. The peer review case is interesting because it inverts the council example: here it is not the institution that is being assailed by augmented citizens, but a community of experts being assailed by augmented peers. The token race is intramural. And yet the failure mode is the same — an institution premised on the scarcity of one kind of attention, eroded by the sudden abundance of another.

This holds for a lot of other institutions as well: markets with infinite attention will at the very least be very different from those where actual people were shouting prices from the market floor.

We end up with the next iteration of Simon’s problem: what does attention consume? And the answer, perhaps surprisingly, is institutions.

The way institutions fail will be very different from case to case. The concert ticket queue is a simple example of one failure mode: the function of the queue is degraded to the point where it no longer works at all for its intended purpose.

But an infinity of attention can also destroy institutions in other ways. Think about law. What if every single violation of every single law could be enforced through the projection of enforcement attention with the help of surveillance and artificial intelligence? What would that mean for the law? Arguably it would lose a lot of its legitimacy and we would chafe against the complete encroachment on our private sphere. We would miss the chance to sometimes just get away with it.

Getting away with it is not cheating. It is the experience that the system has gaps, and it is in these gaps that institutional legitimacy is upheld. If the gaps become too large they render the institution meaningless; if they cease to exist they turn the institution unbearable.

Infinite state attention makes it impossible to be a citizen; it turns you into a subject.

But it is not really infinite, is it?

We have been looking at the edge case, what infinite attention does to institutions, but in reality, of course, there is no such thing as infinite attention. Attention is produced by the use of tokens. What this means is that in an attention arms race the actor with the most tokens wins. The challenge for the local councils is that they have to respond to the sum total token consumption of the citizens that employ AI to object to planning decisions, so they now have to consume tokens to generate attention that can manage the attention injected into the system.

It is easy to see how this goes wrong.

The public actor reacts by deploying AI to generate more attention to meet citizen attention, and citizens deploy more attention in turn to counter that counter and…

The resulting arms race leads to a weird outcome where citizen engagement through augmented attention creates massive costs for the public sector to deal with in a token race where the outcomes still may not be better for either party.

And it is not only in the case of local councils that this can happen - any public sector surface is vulnerable to attention overload attacks, and in some cases private sector surfaces are equally vulnerable.

Think about the use of artificial intelligence in tax enforcement. In theory it would be rational for a tax authority to keep deploying tokens as long as the cost of the additional tokens is less than the additional revenue they generate. Authorities could scrutinize every transaction in a multinational company, aggregate the results, recalculate taxes and levy both extra taxes and penalties on the basis of massive evidence that it would take years for a corporate tax department to sort through.
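The stopping rule in that reasoning can be sketched in a few lines. Everything numeric here is an assumption made for illustration: the diminishing-returns curve, the revenue ceiling, the scale and the batch size are invented, and the function names are hypothetical rather than a description of how any tax authority works.

```python
import math


def recovered_revenue(tokens, ceiling=1_000_000.0, scale=5_000_000.0):
    """Assumed diminishing-returns curve: revenue recovered for a token spend."""
    return ceiling * (1.0 - math.exp(-tokens / scale))


def rational_token_budget(cost_per_token, batch=100_000, max_batches=10_000):
    """Keep buying batches of tokens while the next batch pays for itself."""
    tokens = 0
    for _ in range(max_batches):
        extra = recovered_revenue(tokens + batch) - recovered_revenue(tokens)
        if extra <= cost_per_token * batch:
            break  # the next batch would cost more than it recovers
        tokens += batch
    return tokens


if __name__ == "__main__":
    for price in (0.25, 0.01, 0.001):
        print(f"cost per token {price}: budget {rational_token_budget(price):,}")
```

Under these assumptions the budget grows as tokens get cheaper, so falling token prices alone are enough to widen the scope of scrutiny.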

Unless, of course, these tax departments also deployed artificial intelligence to match the regulatory attention and ensure that for every agent scrutinizing a transaction there was one ready to respond. The resulting token race will probably lead to a gridlocked situation where neither party wins over time - and an enormous consumption of the energy needed for those tokens as the only real result.

Some companies can afford this, and they can in principle set up a game-theoretic ceasefire where each party knows that the cost of the tokens as deployed and defended against will not be greater than the benefit. This new theory of mutually assured depletion (of tokens) creates a kind of weird local cold war logic that leaves smaller companies, without this ability to defend, as the only logical targets.
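One toy way to see why the weakly defended become the logical targets is to model the dispute as a contest. The sketch below assumes a Tullock-style contest, in which the authority recovers a share of the disputed amount equal to its token spend divided by the total spend of both sides. That model, the function names and the numbers are assumptions of this sketch, not claims made above.

```python
import math


def best_attack(stake, defence):
    """Authority's best-response spend against a fixed defence spend.

    Maximises stake * a / (a + defence) - a, giving a = sqrt(stake * defence) - defence.
    """
    return max(0.0, math.sqrt(stake * defence) - defence)


def authority_net_gain(stake, defence):
    """Net gain for the authority when it plays its best response."""
    if defence <= 0:
        return float(stake)  # undefended: a negligible spend recovers everything
    attack = best_attack(stake, defence)
    if attack == 0.0:
        return 0.0  # defence is deep enough that attacking does not pay
    return stake * attack / (attack + defence) - attack


if __name__ == "__main__":
    # Same disputed amount (100), increasingly well-funded defence.
    for defence in (0, 4, 25, 100):
        print(f"defence {defence}: net gain {authority_net_gain(100, defence):.1f}")
```

In this model the authority's net gain shrinks as the defence budget grows and reaches zero once the defender can spend as much as the disputed amount, so a rational enforcer would concentrate on those who cannot afford to answer.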

But what then happens with the legitimacy of the tax system?

If equity is measured in tokens, the overall legitimacy of any public sector system will slowly erode to the point of meaninglessness.

So what do we do?

The ability to deploy attention, through agents and ultimately based on your available tokens, now emerges as a new kind of power, attentive power, that we need to understand more deeply in order to manage it.

Requiring human elements and reducing the possibilities to deploy artificial attention is of course one option, but it remains to be seen how effective that will be. Requiring that you turn up with your own planning objection and book a meeting with another human being will be unfair in another way: it means that only those with the time will be able to engage. On the other hand, maybe that is how democracy overall stays relevant: that we choose to build it with real, authentic human attention?

Other options include limiting the attention / tokens you can deploy in certain systems - but that seems hard to police. Overall the detection of when tokens have been consumed is not easy. Perhaps the first step is just to be aware of when we see these token races accelerate, and then look at the specific case and ask if there is a way in which we can benefit from the added attention, without risking ending up in a race to token depletion.

Ultimately, underneath all of this, lies a deeper realization that we should come back to at a later point, and that is that power, now, quite clearly is access to energy.

Thanks for reading,

Nicklas

1. See Smyth, Chris, “English councils to trial Google AI tool to speed up planning decisions”, Financial Times, 2026-05-02, https://www.ft.com/content/91ce4475-d325-4d65-babb-4214996bc0f6?syn-25a6b1a6=1

2. See https://www.nmh-p.de/wp-content/uploads/Simon-H.A._Designing-organizations-for-an-information-rich-world.pdf