Unpredictable patterns #153: The social design of cognition

Weil's principle, the return of illumination and cognitive liquidity

Dear reader,

Easter is almost over, and I finally had some time to sit down and write out a few thoughts about cognition - a term that I think is much better than intelligence - and how it changes with the arrival of AI. I hope you enjoy it!

Dimensions of cognition

When we explore the impact of artificial intelligence on cognition we often talk about substitution. Where we used to have human cognition we will now get artificial cognition - but we seem to assume that the nature of cognition itself will not change. Now, increasingly, we are realizing that this may not be true, and we are starting to see the first tentative discussions about the long term trajectory of cognition itself. Let’s look at a few of the interesting dimensions of this debate, and how they might evolve as cognition starts to change.

Complexity

The first is complexity. Back in 2022 I wrote an essay called “Nature loves to hide”, in which I argued that the dream of technology as a rationalizing agent was collapsing in complexity, as technology increasingly becomes indistinguishable from nature:

“If we trace the trajectory of this trend we find a point in time where we have to revise our idea of knowledge – since we will, in some sense, know things we cannot explain. Where competence and comprehension are decoupled, knowledge and explanation also are divorced.”

This is not a new trend: cognition becomes complex and specialized with progress. Most of us cannot explain how our fridge works, but up until now there has been someone who can, and we have been confident that if we spent the time we would be able to understand it as well.

Understanding was within our grasp.

That is increasingly not true. The way this happens is interesting: first the number of people who understand something decreases, and then we pass a limit beyond which no one understands a particular technology or phenomenon. We can still use it, and it can still increase our capabilities - but we do not understand it. No one does.

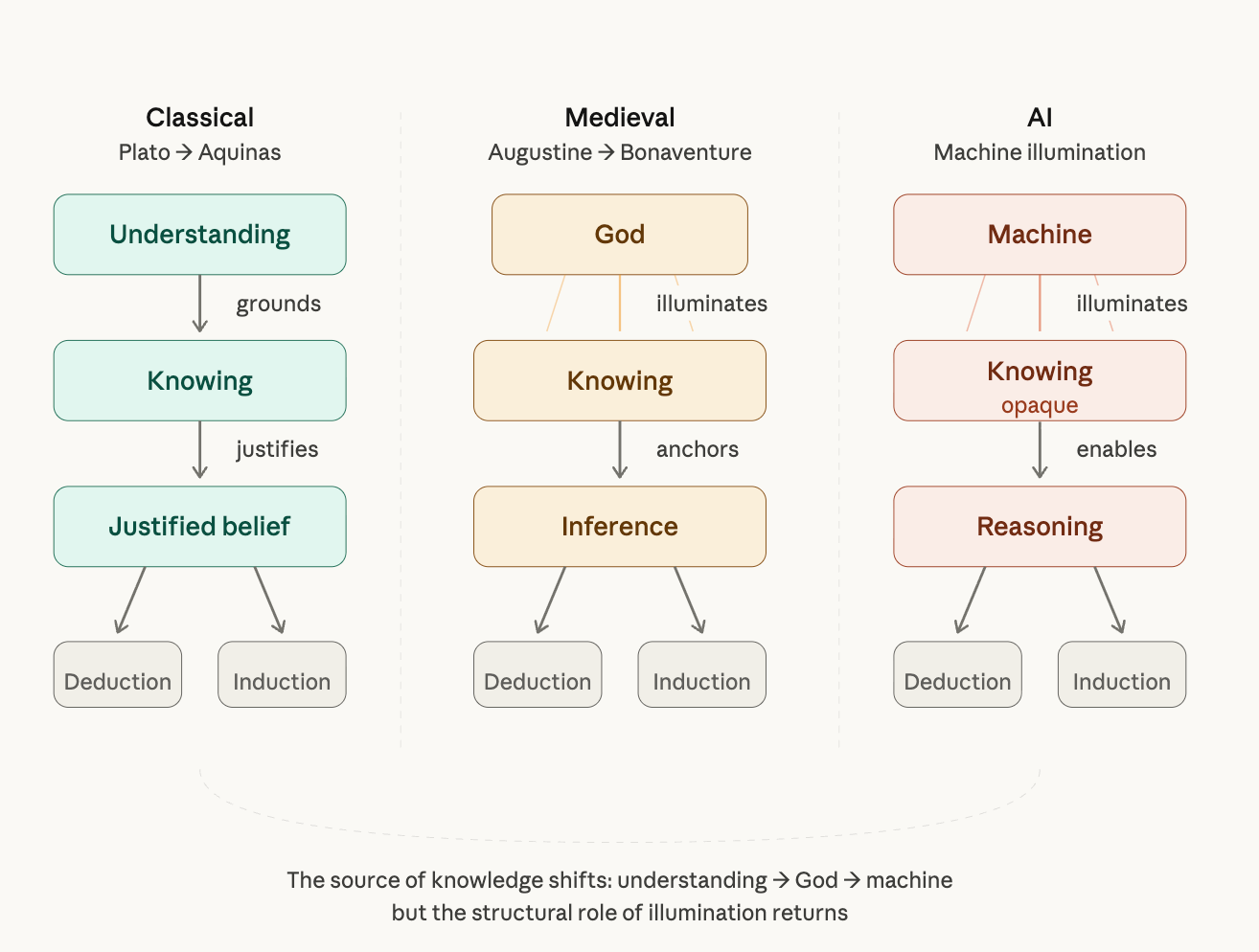

In science this will mean that we will need a way to accommodate a state of affairs in which we “know” something but do not understand it. If knowledge previously, following Plato, was “justified true belief”, we now need to accept that one method of justification is to point at the machine as the origin of the knowledge we claim to have.

It almost seems as if we need to accept a new kind of axiom here, where the source of the knowledge legitimizes the knowledge itself.

This is not a new model in epistemology. In medieval philosophy the key methods of arriving at knowledge were not just deduction and induction; there was also a third form of knowledge production: illumination.

Illumination was thought to anchor both inference and syllogism, and without it knowledge would be insufficient. A large part of the scholastic revolution under Aquinas was to suggest that maybe illumination was not needed for understanding, after all - or not in the direct sense suggested by earlier Christian neo-platonic writers.

We seem, however, to be building illumination into future knowledge infrastructures. This time it is not God who is the source of that illumination, but the machine. We can reason on the basis of the illuminated truths the machine has produced, but we need to take those truths as given. Artificial intelligence, in this scenario, is a kind of illumination epistemology engine.

Complexity, then, changes cognition fundamentally in that it forces us to decouple knowing and understanding in ways that take us back to medieval models of knowledge.

Liquidity

The second dimension we should explore is liquidity. AI is making cognition much more liquid, in the sense that it is no longer stuck in a single materiality. Take a supersimple example: NotebookLM.

NotebookLM takes text, video and other inputs and transforms them alchemically into podcasts, mindmaps and presentations. The thinking that went into a paper can be distilled into an infographic or a video with a few clicks. This new liquidity means that we can translate almost any kind of cognition from one form to another.

If the challenge with complexity is opacity, the challenge with liquidity is different: it is about transformation.

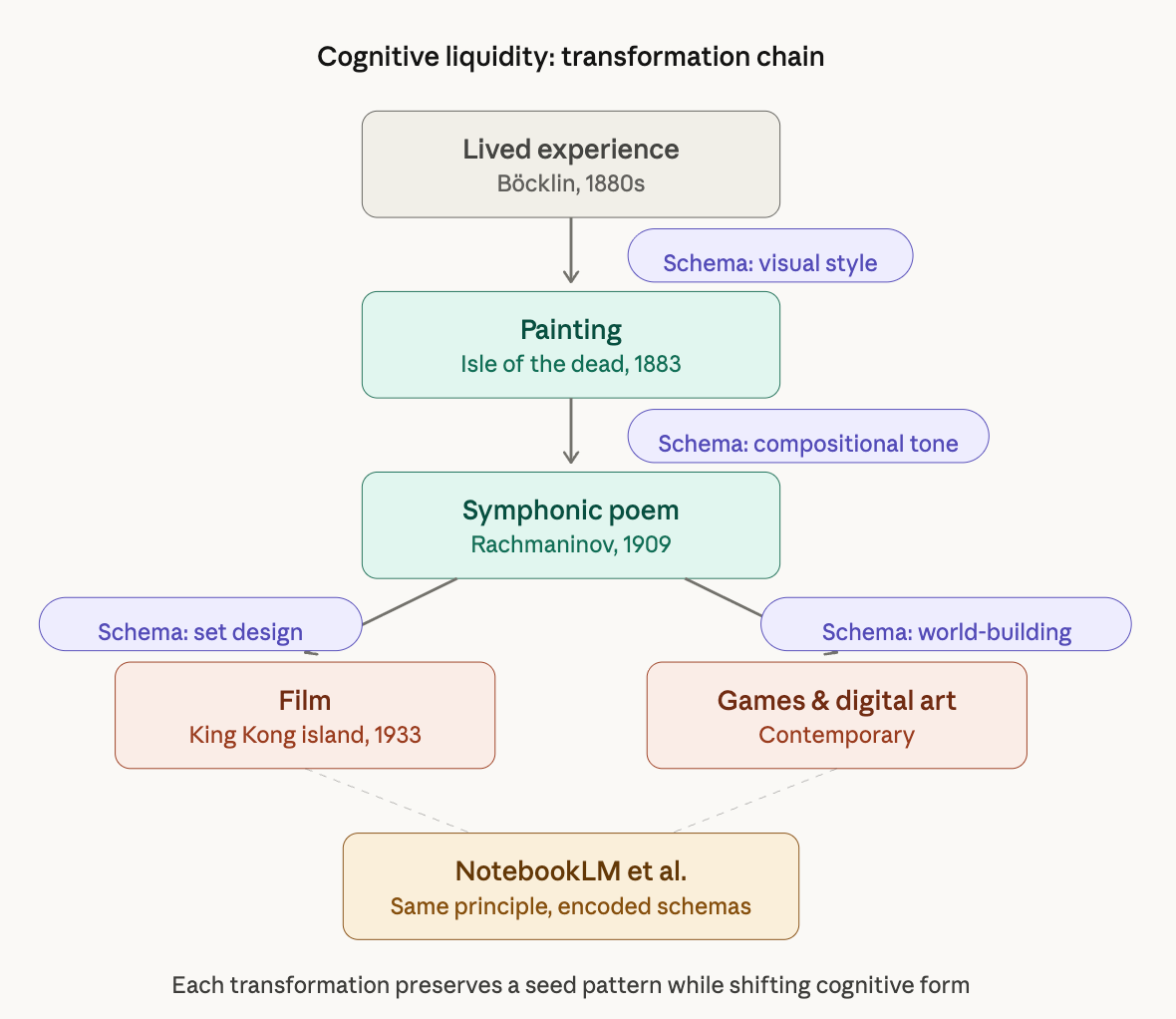

Transforming a cognitive artifact from one phase state into another requires that we first establish clear ideas about the ways in which that transformation is performed. Such transformations need to follow some kind of transformative schema. We see this most clearly when we look at existing transformations of various kinds.

Take the Isle of the Dead, the painting by Arnold Böcklin. Painted in 1883, it encapsulates principles of transformation or “style” in the sense that it transforms Böcklin’s experience into colours, shapes, expressions and themes.

The painting was later seen - in a black and white rendering - by Rachmaninov, who decided to compose a symphonic poem on the basis of it. Here a new set of transformations is brought to bear as the composer turns the painting into music. Exactly how is not that simple to say, of course - some hear the rowing of the oars or the swelling of the sea at the beginning of the piece, others feel the sorrow of the pines against the white marble in the flutes - and yet others may center on the dread that runs throughout the piece, as death has claimed the island as its own.

The painting then has lived on in a kind of intertextuality. The island where King Kong resides was reputedly modeled on it, and it occurs in computer games and modern movies as well - partially transformed into these new artforms.

The transformation of art into different artforms is the most extreme example of cognitive transformation, and the schema here is interesting to think through: it needs to anchor in some form of life that we all share in order for it to resonate as a transformation, but the latitude we give artists when they say they are transforming other works of art is great.

In contrast, then, the transformations done by NotebookLM may seem tame. But the thing I want to highlight is that the principle is the same. One cognitive artifact is transformed into another and so the underlying cognition, the underlying thinking, is transformed and becomes more and more liquid.

The fact that the transformational schemas underlying NotebookLM work so well is in itself interesting: it suggests that these schemas may be encoded deep in the data sets used to train the models doing the transformations - and maybe it is possible to build versions of NotebookLM that take paintings and produce music.

As we do so we need to recognize that these transformational schemas are expressed as cognitive styles. This is true for the presentations and infographics in NotebookLM, and the choice and use of styles will ultimately determine the limits of our cognitive liquidity.

Maybe one form of cognition that becomes more important is uncovering, designing and exploring such styles. This would be something akin to the rules and frameworks of the famous Glass Bead Game imagined by Hermann Hesse - a game in which all human knowledge is united in complex games that express underlying patterns.

This liquidity will challenge our conventional understanding of what constitutes a work, of course. Works, original enough to carry and survive transformations of this kind, will be more like seeds for a creative pattern that can be explored by many different authors, or players, of that pattern - perhaps also showing us that creativity is more of a collaborative thing than something reserved for the lone romantic genius.

Arguably, the recognition of the importance of creative IP-universes is part of this transformation, where creativity plays out in a universe across a number of different cognitive artifacts, with corona effects like fan fiction surrounding these clusters of creative cognition.

Liquidity changes cognition in that it allows cognition to explore more forms and artifacts than ever before, forcing us to examine principles of transformation more closely and to pay attention to the underlying creative patterns we play with - the rise of a kind of meta-cognitive creativity.

Configuration

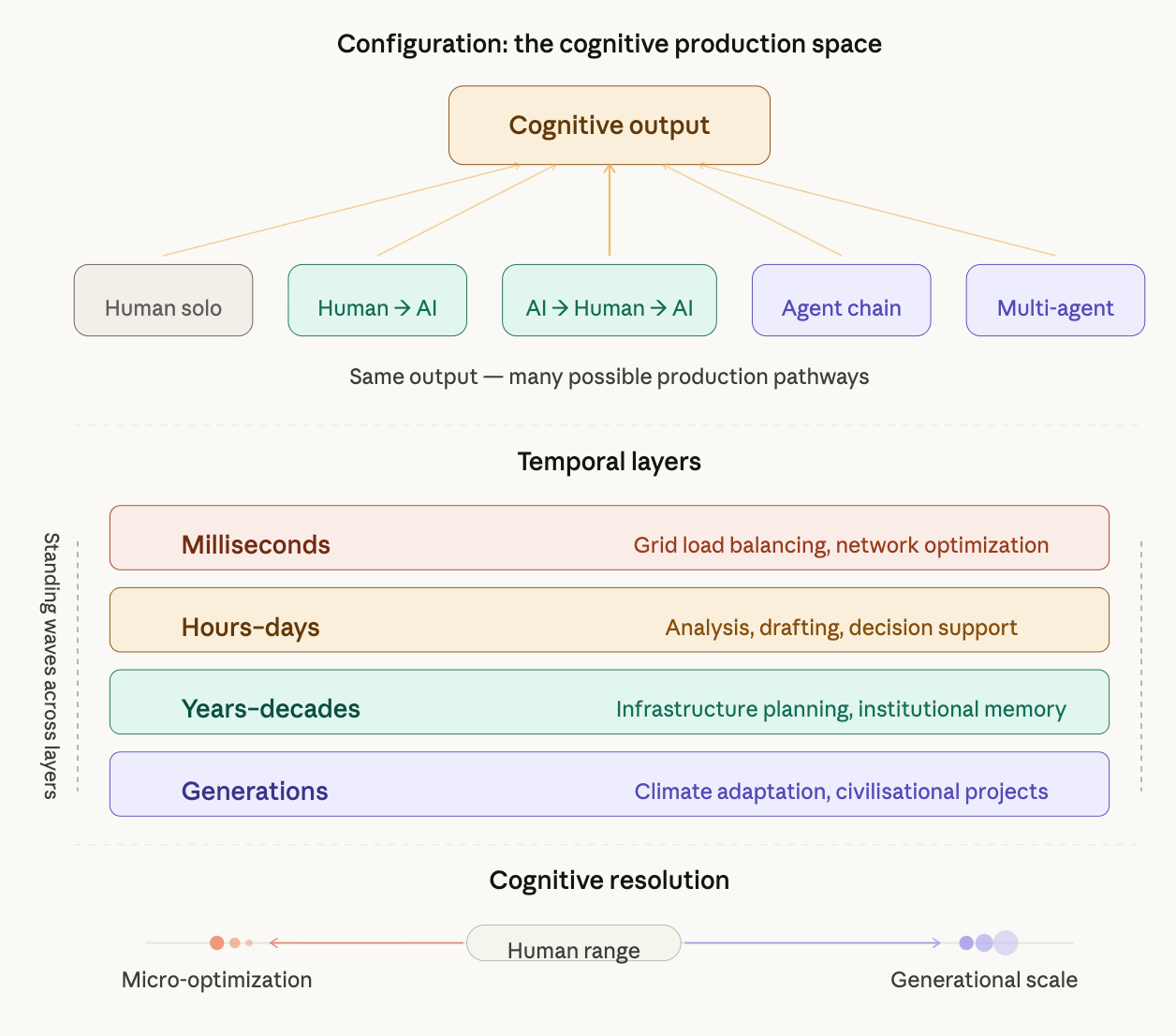

The third dimension is configuration. AI increases the number of possible cognitive configurations in a way that allows for cognition to happen in many more ways than before, in both simple ways (human-to-agent, agent-to-agent, and so on) and in more complex ways, as cognition can now be configured in different temporal layers as well. As the number of possible configurations that can produce different kinds of cognition increases, so does the probability both of co-occurring innovations and of new standing waves of cognition becoming established, which creates more volatility in the cognitive space.

This change also allows for configurations of cognition that exist at various resolutions. It becomes possible to think about the very small or the very big in ways that we could not access before. Optimizing systems at scales where human cognition is not fine-grained enough, whether for energy gains or for justice, becomes as possible as thinking about generational projects across time.

Human cognition has a natural resolution in space, a pixel size below which we cannot usefully think. Hybrid cognitive networks do not need to have this, of course, and could think about things literally too small for us to consider: energy losses in networks that require access both to different timescales and to different cognitive resolutions. There is also an upper limit to our thinking in time and space, as is evidenced by the slow failure of infrastructure across our civilisation. New configurations of cognition could think on infrastructure scales quite naturally, enabling us to build a much more robust society overall.

Configurations are never neutral, however, as recognized by terms like “cognitive offloading” or even “cognitive surrender”. Configuring cognition so that we do as little of it as possible is completely doable, but probably not what we want. The long-term effects of cognitive configurations will shape the key resource we should be managing more carefully: human agency.

We can even formulate a first version of a principle here, let’s call it Weil’s principle after Simone Weil.

Weil’s principle: Cognition should be configured so as to maximize human agency.¹

This principle can guide both implementations of AI in organizations and regulation of AI overall, and serves to remind us that no cognitive configuration is neutral. It differs from the idea of a human in the loop in that it emphasizes agency, not presence. Weil’s insistence on human attention as the key component in a society is central here: configure cognition so that the human nodes in the network are attending, not just present.

The social design of cognitive systems

The challenge, then, becomes one of designing cognitive systems across all these different dimensions in ways that help us solve problems, advance our knowledge and lift more people out of poverty while preserving human agency. This is no simple task - but focusing on the ways in which cognition changes, rather than just arguing about who does it (the AI or us), seems more worthwhile to me.

We can approach this social design challenge in different ways. We can, for example, resist the increasing complexity and liquidity, while restricting the wealth of possible configurations of cognition. Or we can try to navigate within them as constraints. I suspect a sound approach will require both - constantly asking how we want to think together.

This is a nice extension of the fundamental political question as stated by Aristotle: politics is about how we live together. What we need is a new politics of how we think together, not just with each other, but with new cognitive systems of various kinds.

Thanks for reading,

Nicklas

¹ Now, maximizing any property of a system is never easy - and this principle needs working out across many different planes. One is time. Do we maximize human agency over a short time horizon or over a longer one? Another is the question of whose agency. Individual agency or collective agency? In these two sub-questions alone we see complex, political questioning - and that is intentional. If we start from the principle, we may well have a good discussion that allows us to approach the question of designing cognition well in a structured way.