Essay: After agents: a-cognitive problem solving

On the overvaluation of intelligence, designing cognitive habitats and relocating the alignment problem

This is the first in a series of essays on assumptions about artificial intelligence, agents and new systems. I will state the case in sweeping generalizations, so as to make it as easy as possible to attack, and to invite outrage and opposition. It is, after all, the best way to ensure that we get on with thinking and developing our ideas: not to be told that “that is a great idea” but to face the criticism of our peers. Ernst Mayr writes something to this effect in his seminal history of biological thought, The Growth of Biological Thought, and I think he is on to something, even if it is certainly new to the way I usually write. So here goes.

The concept of intelligent agents is subtly plagued by images of homunculi running our errands for us. These little agents can think, act and plan. They reason and cogitate and do work for us - but they are stuck in metaphorical scaffolding that still assumes that thinking and intelligence are key elements for solving problems. This is, of course, not true.

A lot of problems are solved a-cognitively. Evolution is the obvious example: it does not care about theories, it does not plan and it does not reason. Yet it has designed beings so complex as to think that their own thinking and intelligence are the key to exploring the world. Our overvaluation of intelligence is a second cousin of creationism, overvaluing what we are at the cost of building new capabilities that can help us address complex problems.

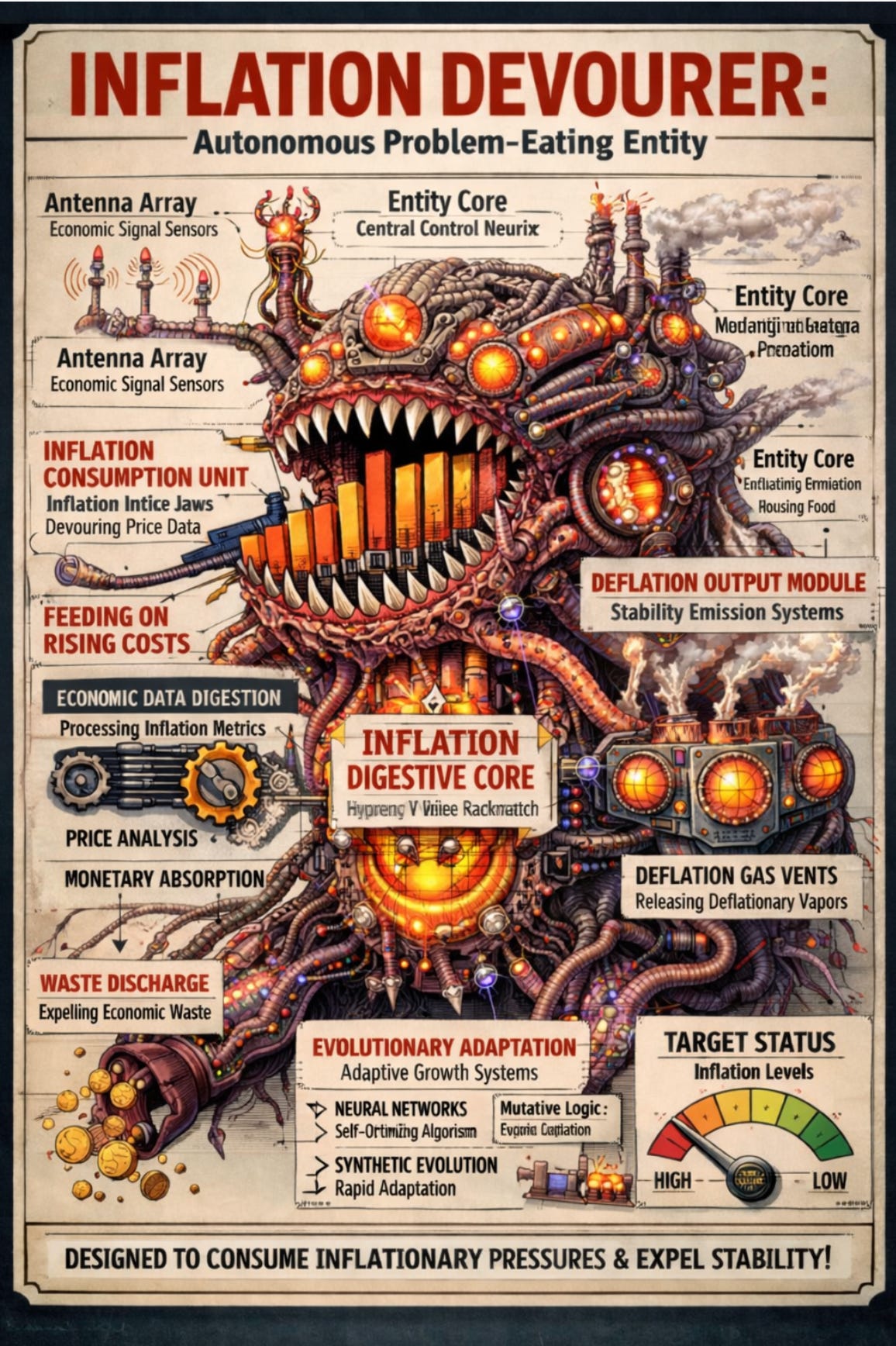

We should abandon the idea of agents and instead think about how we can design organisms with basic needs, wants and agency for specific problem environments and set the conditions for them to evolve. Let’s build organisms that eat plastic and inflation, or organisms that feel interest pressure like heat and evolve trading strategies and public interventions to reduce it. Let’s focus on a-theoretical approaches that allow for a maximum of signals to build a selection pressure on these organisms and see what we can do. Want to develop more efficient weapons? Make organisms that eat system stability or build public unrest to live and thrive in, feeding on negative public sentiment and chaos.

Such an approach would require, of course, that we reconceive the way we work with artificial intelligence. We need only small degrees of intelligence, more agency, really, and we should try to figure out if there is a basic set of organismic intelligence that we can develop and then set these new creations off in well-defined problem environments. We need to design artificial metabolisms alongside the basic agency we provide the organisms with, and we need to be ready for massive, unexpected second-order impacts from our early attempts.

This thinking - that artificial intelligence is the narcissistic remix of artificial life, and that we should stop trying to find ourselves in the mirror of the machine - is not new. The artificial-life folks have been around for ages, and their research is puttering along, undisturbed by the massive upheaval created by chatbots and large language models. Their aims might be described more as simulation than creation, however. Building artificial life, artificial organisms, that model how real organisms work is important and valuable - but building entirely new organisms that mimic the processes developed by evolution in domains and environments that are purely cognitive is rarer in the field.

Yet it is likely to be a really interesting development. In one sense it also follows a well-established trajectory in the history of artificial intelligence: when the field sheds the images of human capabilities and intelligence that hold it hostage, it progresses. The image of humans as chess players and logicians held back AI for decades, before we accepted that we may well be more stochastic than that, and that our self-understanding is severely lacking as a guide for how to replicate the capabilities that we exhibit. Abandoning the agent perspective is just the next natural step in that slow abandonment of anthropomorphic chains and limitations.

Such an approach would also allow us to ask some really interesting questions about different complex systems and how to design organisms that could thrive and live in them. How do you describe the economy as an evolutionary environment, and what kinds of metabolism would work best? Here, of course, we should refrain from thinking that our thinking will lead us right - and we should rather build systems that generate and evolve different candidate metabolisms for different problems.

We meet, here, an iteration of the observation that Marc Andreessen famously made in his 2011 article “Why Software Is Eating the World”, but we follow up with an impatient question: “Yes, of course, but which software eats what part of the world, and how do we design its metabolism?”

There is an issue here, too, about the level of intervention. It is tempting to think that we should be designing organisms for all these cognitive habitats, but it may well be that while this could be instructive and interesting as a research project, we really need to figure out how to design evolutions. We can set the spark - initiate life in a cognitive habitat - but then we need to step back and let evolution do its work at silicon speed, and see what evolves. What are the most efficient inflation-predators? The most successful evolutionary designs for fighting pandemics? Which evolutionary designs will succeed fastest at developing a new physics that unifies gravity and quantum mechanics? Building evolutions that lead to ecosystems that eat physics and mathematics problems seems fanciful, but we have at least an existence proof: we do this. Our curiosity is a basic need, and our need for cognition, play and learning is real - implemented in us by evolutionary selection pressures over time. Why could that not be replicated in even more targeted versions?

We have early examples of approaches that could be ancestors of such problem eaters: the models that play themselves for better matrix multiplication are engaged in a kind of competition that could easily be made into a fitness competition - and it seems these game-models are quite effective. What if we turned them into full-blown evolutionary competition for scarce resources, where the better matrix multiplication confers a fitness advantage?
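To make the idea of a fitness competition for scarce resources a little more concrete, here is a deliberately tiny sketch in Python. Everything in it is an assumption made for illustration: the "problem" is a made-up target vector standing in for something like a better matrix multiplication, and the names `TARGET`, `error`, `mutate` and `evolve`, as well as every number, are hypothetical. No organism plans or reasons; each one just eats, pays for its upkeep, splits when it has enough energy and starves when it does not.

```python
import random

# Hypothetical stand-in for "the problem": the closer a genome is to this
# vector, the better the organism is at eating the problem.
TARGET = [0.3, -1.2, 2.0, 0.7]


def error(genome):
    """Squared distance to the toy problem's solution; lower is better."""
    return sum((g - t) ** 2 for g, t in zip(genome, TARGET))


def mutate(genome, rng, scale=0.1):
    """Blind variation: small Gaussian noise, with no model of the problem."""
    return [g + rng.gauss(0.0, scale) for g in genome]


def evolve(generations=200, capacity=40, food=40.0, upkeep=1.0, seed=0):
    """Run the habitat and return the best error seen in each generation."""
    rng = random.Random(seed)
    # Each organism is a (genome, energy) pair.
    population = [([rng.uniform(-3.0, 3.0) for _ in TARGET], 2.0)
                  for _ in range(capacity)]
    history = []
    for _ in range(generations):
        # Scarce resource: a fixed amount of food per generation, shared in
        # proportion to how well each organism handles the problem.
        weights = [1.0 / (1e-9 + error(genome)) for genome, _ in population]
        total = sum(weights)
        survivors = []
        for (genome, energy), weight in zip(population, weights):
            energy += food * weight / total - upkeep  # eat, then pay metabolism
            if energy <= 0:
                continue  # starved
            if energy >= 4.0:
                # Reproduce: split the energy with a mutated offspring.
                survivors.append((genome, energy / 2))
                survivors.append((mutate(genome, rng), energy / 2))
            else:
                survivors.append((genome, energy))
        # Crowding: the habitat only holds `capacity` organisms, and the
        # least energetic ones are pushed out.
        survivors.sort(key=lambda organism: organism[1], reverse=True)
        population = survivors[:capacity]
        if not population:
            break  # extinction
        history.append(min(error(genome) for genome, _ in population))
    return history


if __name__ == "__main__":
    best = evolve()
    print(f"best error after generation 1: {best[0]:.3f}")
    print(f"best error after generation {len(best)}: {best[-1]:.3f}")
```

The design choice worth noticing is that there is no explicit "pick the best" step anywhere. Selection happens only through who gets fed, who can afford to reproduce and who is crowded out, which is roughly what turning a game into a metabolism would mean.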

In this new world intelligence is less interesting than a-theoretical cognition: systems that solve problems without thinking in the human sense, where the cognition is external to the individual and a result of individual actions and individual agency, rather than individual reasoning. And maybe we will find that overvaluing intelligence was just another version of the constant anxiety we are plagued by as we compare ourselves to the machine. The search for something the machine cannot do is ending, but we still adamantly demand that the machine do what it does in the way we do it - because surely there can be no more effective way of doing what we do than the ways we do it?

Human metaphors, analogies, mental models and distorted mirror images still litter the entire field of artificial intelligence. Even when we think about AI safety we think about instilling our values and morals in the systems in different ways, perhaps teaching them as children or raising them collectively as we slowly convince them to have our best interest at heart and to take our needs into account. Maybe this is just a vain attempt at forcing what we are building to remain at least metaphorically within a specific human logic of values and alignment.

When we look back at this technological era we may well be calling it the age of the mirror fallacy: where we thought we needed to design the technology in our image, in order to preserve our self-image. Our fear of the black box is perhaps just a prescient fear of a-cognitive systems that evolve in problem domains and solve them through adapting to solutions and equilibria that benefit us, but which we can never truly or deeply understand.

In a way, however, this does solve, or at least relocate, the alignment problem. Rather than constantly building smarter systems, we build systems that are better adapted to the problems we need them to eat. What we have to deal with then is a Cambrian explosion of digital organisms and hybrid ecological competition, not the scheming high lords of intelligence. (And, well, let’s be honest - we seem to be pretty good at orchestrating the extinction of those organisms that compete with us within the evolutionary niche we occupy.)

And if you devalue and dismiss all of the above: is it not at least worth considering that maybe “intelligence” is a word that holds us hostage in an image that may be restricting our options?

NBL Stockholm 2026-03-13